Thoughts from an Operations Wrangler; I lead the production engineering team, running one of the largest SaaS observability platforms on the planet. Wavefront started in 2013 (I joined in 2016) and was acquired by VMware in 2017. These thoughts are all mine.

I find myself more and more talking with Wavefront users – both internally and externally – on How Wavefront uses Wavefront to Wavefront.

Or, how Wavefront’s Production Engineers use Wavefront to run Wavefront.

And, in what I hope becomes a series of posts, I want to go a bit deeper into how we do what we do.

At our core, as production engineers, Reliability is our product. Alerts are fundamental to that, and they are our starting point.

why alerts?

We don’t look at dashboards until an alert tells us to. We don’t look at charts or create ad-hoc queries until an alert tells us to.

We generally aspire to sit beachside until an alert says otherwise.

Within Wavefront Operations we have a few truisms about alerts:

- alerts are always evolving

- alerts are actionable or informative (more on this later)

- any alert that pages is an alert that keeps us from the beach (and tacos)

An alert is the system telling us to go look at a thing. It’s the system telling us something important is outside of some definition of normal.

The majority of our alerts measure the rate of change of a metric or a change in the slope of a line or some other complicated math & science.

One example, the alert “Data Ingester SQS Message Processing Variance Detected”:

variance(rate(ts(dataingester.sqs.processed, context=* and (tag="*-primary" or tag="*-secondary"))), hosttags, context) > 10
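
If the query reads like alphabet soup, here is a rough Python sketch of the shape of that condition: turn each series of counter samples into a per-second rate, take the variance of those rates across series at each timestamp, and flag the timestamps where it exceeds the threshold. This is only an illustration, not how Wavefront evaluates the query. The function names and sample data are made up, the grouping by hosttags and context is left out, and the use of population variance is an assumption.

```python
from statistics import pvariance


def per_second_rate(samples):
    """samples: list of (timestamp_seconds, counter_value), sorted by time."""
    rates = []
    for (t0, v0), (t1, v1) in zip(samples, samples[1:]):
        rates.append((t1, (v1 - v0) / (t1 - t0)))
    return rates


def variance_breaches(series_by_source, threshold=10.0):
    """Return timestamps where the variance of rates across sources exceeds threshold."""
    rates_by_time = {}
    for samples in series_by_source.values():
        for t, rate in per_second_rate(samples):
            rates_by_time.setdefault(t, []).append(rate)
    return [
        t
        for t, rates in sorted(rates_by_time.items())
        # Population variance is an assumption of this sketch.
        if len(rates) > 1 and pvariance(rates) > threshold
    ]


# Made-up counter samples: (timestamp in seconds, messages processed so far).
example = {
    "ingester-a": [(0, 0), (60, 600), (120, 1200)],
    "ingester-b": [(0, 0), (60, 600), (120, 1800)],
}
# ingester-b doubles its rate in the second minute, so only t=120 is flagged.
breaches = variance_breaches(example)
```

The point is that the alert does not care how busy the ingesters are, only whether they have stopped behaving like each other.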

the evolution of an alert

Alerts start as

- a chart, exploring the data or patterns

- an alert where we test our hypothesis using Wavefront’s back testing

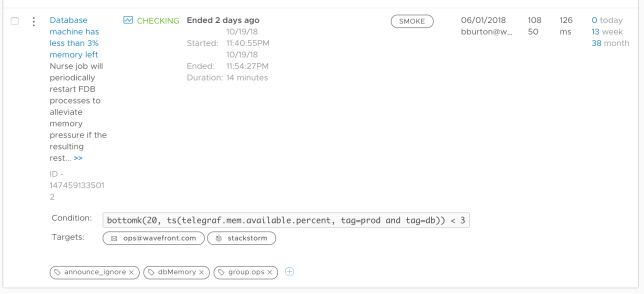

- an experimental alert, tagged with an alert tag path “experimental”, with an alert destination to anywhere but PagerDuty. It’s here where we refine the alert – it should not contribute to alert fatigue.
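
A cheap guard for that last stage is a check that no experimental alert can reach the pager. The sketch below assumes alert definitions exported as plain dictionaries. The field names (`name`, `tags`, `targets`), the `pagerduty` target prefix, and the dot-separated tag path are all hypothetical, not Wavefront's actual schema.

```python
def is_experimental(tags):
    """True if any tag is 'experimental' or sits under that (assumed dot-separated) tag path."""
    return any(t == "experimental" or t.startswith("experimental.") for t in tags)


def experimental_alerts_that_page(alerts, paging_prefix="pagerduty"):
    """Return the names of experimental alerts that have at least one paging target."""
    offenders = []
    for alert in alerts:
        pages = any(
            target.startswith(paging_prefix) for target in alert.get("targets", [])
        )
        if is_experimental(alert.get("tags", [])) and pages:
            offenders.append(alert["name"])
    return offenders


# Hypothetical alert definitions, for illustration only.
alerts = [
    {"name": "sqs variance v2", "tags": ["experimental.ingest"], "targets": ["slack:#ops-experiments"]},
    {"name": "disk fill forecast", "tags": ["experimental"], "targets": ["pagerduty:primary"]},
    {"name": "ingest lag", "tags": ["production"], "targets": ["pagerduty:primary"]},
]
offenders = experimental_alerts_that_page(alerts)
```

Anything that shows up in `offenders` is an experiment that can wake someone up, which is exactly what this stage is meant to avoid.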

Eventually, we have a “production push” and the alert is live.

But we aren’t done until whatever the alert catches gets fixed automagically through an integration with something like Jenkins or StackStorm.

when an alert triggers there must be an action

We try to frame things as the “2am problem” – what am I willing to wake up for at 2am? There are many things for which I will and many more that I won’t.

When an alert fires it must have some action. And generally, we obsess about refining alerts such that when one fires, there is little to no debugging. Because alerts & queries in Wavefront can be so precise, we evolve each alert until it represents a singular action.

Singular actions → computer code → an alert that triggers a webhook → leave me alone, I’m in bed sleeping.
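
That chain can be sketched in a few lines of Python: a lookup from alert name to exactly one action, called by whatever receives the webhook. The payload fields (`alert_name`, `status`, `source`), the alert name, and the action below are hypothetical. In practice the action would kick off something like a Jenkins or StackStorm job rather than return a string.

```python
import json


def restart_ingester(payload):
    """Stand-in for a real remediation job; returns a description of what it would do."""
    return "restart ingester on " + payload.get("source", "unknown")


# One alert name maps to one action. Names here are invented for illustration.
ACTIONS = {"Data Ingester Stalled": restart_ingester}


def handle_webhook(body, actions=ACTIONS):
    """Run the single action registered for a firing alert, or return None."""
    payload = json.loads(body)
    if payload.get("status") != "firing":
        return None  # nothing to do for other statuses in this sketch
    action = actions.get(payload.get("alert_name"))
    if action is None:
        return None  # no singular action yet, so this one still needs a human
    return action(payload)


result = handle_webhook(
    json.dumps(
        {"alert_name": "Data Ingester Stalled", "status": "firing", "source": "ingester-a"}
    )
)
```

An alert that falls through to `None` is a reminder that it has not finished evolving.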

alerts are actionable… sometimes

Not all alerts page out. When we do get a page we want to assimilate as much information as possible. As quickly as possible.

What else is going on in the system?

We use alerts to do that too. Alerts should also be informative. We label them as INFO or SMOKE, and they help bring context. And since alerts are overlaid in Wavefront charts, we get even richer context.
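
As a sketch of the idea, pulling that context together could look like this: given the time of a page, list the INFO and SMOKE alerts that fired shortly before it. The event tuples, the 15-minute window, and the function name are assumptions for illustration. In Wavefront itself we get this from the chart overlays.

```python
def context_for_page(page_time, events, window_seconds=900):
    """Return (name, label) for INFO/SMOKE alerts that fired in the window before the page."""
    return [
        (name, label)
        for fired_at, label, name in sorted(events)
        if label in ("INFO", "SMOKE")
        and page_time - window_seconds <= fired_at <= page_time
    ]


# Made-up events: (fired_at in seconds, label, alert name).
events = [
    (1000, "INFO", "deploy started"),
    (1500, "SMOKE", "sqs queue depth rising"),
    (100, "INFO", "nightly compaction"),
    (1550, "PAGE", "ingest stalled"),
]
context = context_for_page(1600, events)
```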

#BeachOps

Ultimately we want to be at the beach. And an alert that fires is an alert that keeps us away from the beach.

Everyone talks about “single pane of glass” but we use Wavefront as our First Pane of Glass, consolidating disparate metrics sources into single charts and into single alerts. You might call this full stack alerting — we call it #BeachOps.

We leverage Wavefront’s analytics engine and query language to build alerts that are actionable by a computer, or by a human, in which case we use Wavefront to provide as much context as possible. We also constantly evolve alerts. Taken together, these practices have helped prevent alert fatigue and keep the team size small while the infrastructure has grown by 400%.

And since Wavefront alerts can trigger actions in automation tooling like Jenkins or StackStorm, we can spend our time at the beach. With tacos.